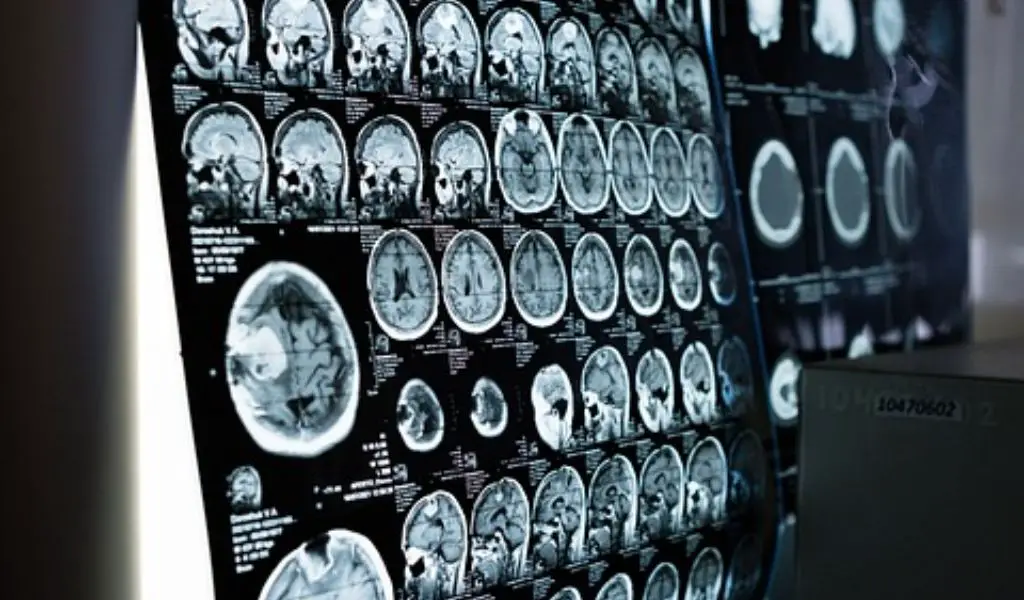

I recently found myself lying on my back in the cramped confines of an fMRI machine at a research facility in Austin, Texas, wearing an ill-fitting pair of scrubs. “The things I do for TV,” I mused.

Anyone who has had an MRI or fMRI scan can attest to how loud it is: swirling electrical currents create a strong magnetic field that produces detailed scans of your brain. This time, however, I could barely make out the loud cranking of the mechanical magnets, because I had been given a specialized pair of headphones that started playing clips from The Wizard of Oz audiobook.

Why?

Neuroscientists at the University of Texas at Austin have found a way to translate scans of brain activity into words, using the same kind of artificial intelligence technology that powers the ChatGPT chatbot.

The breakthrough could fundamentally change how people who have lost the ability to speak communicate. It is just one of many innovative uses of AI to emerge in recent months as the technology continues to improve and seems poised to touch every aspect of our lives and society.

Alexander Huth, an assistant professor of neuroscience and computer science at the University of Texas at Austin, told me, “So we don’t want to use the phrase mind reading. We think it evokes abilities that are really beyond our reach.”

Huth volunteered to participate in the study, spending more than 20 hours inside an fMRI scanner listening to audio samples as the device took precise images of his brain.

After analyzing both his brain activity and the audio he was listening to, an artificial intelligence model was eventually able to predict the words he was hearing just by monitoring his brain.

Photo Credit: Unsplash via desygner.com

The researchers used GPT-1, a language model from San Francisco-based startup OpenAI that was built from a sizeable collection of books and websites. By analyzing all of this data, the model learned how sentences are constructed, which is essentially how people talk and think.

The researchers trained the AI to analyze the brain activity of Huth and other volunteers as they heard particular words. Eventually, the AI learned enough to predict what Huth and the others were listening to or watching from their brain activity alone.
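To make the idea of training on one person's scans and then decoding from them more concrete, here is a minimal sketch. It is not the researchers' actual pipeline. It assumes, purely for illustration, that each word is represented by a small feature vector, that simulated "voxel" responses are a noisy linear function of those features, and that decoding means picking the candidate word whose predicted response best matches a new scan. Every name, number, and data point below is hypothetical.

```python
import numpy as np

def fit_encoding_model(features, responses, alpha=1.0):
    """Ridge regression: learn weights mapping word features to voxel responses."""
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ responses)

def decode(observed, candidates, candidate_features, weights):
    """Return the candidate whose predicted response is closest to the observed scan."""
    predicted = candidate_features @ weights
    distances = np.linalg.norm(predicted - observed, axis=1)
    return candidates[int(np.argmin(distances))]

rng = np.random.default_rng(0)
vocab = ["dorothy", "road", "yellow", "brick", "wizard"]
word_features = rng.normal(size=(len(vocab), 8))  # hypothetical word features
true_weights = rng.normal(size=(8, 50))           # simulated subject-specific mapping

# "Training": simulated scans recorded while the subject hears known words.
train_idx = rng.integers(0, len(vocab), size=200)
train_x = word_features[train_idx]
train_y = train_x @ true_weights + 0.1 * rng.normal(size=(200, 50))
weights = fit_encoding_model(train_x, train_y)

# "Decoding": a new simulated scan recorded while the subject hears "road".
new_scan = word_features[1] @ true_weights + 0.1 * rng.normal(size=50)
decoded_word = decode(new_scan, vocab, word_features, weights)
print(decoded_word)
```

Because the weights are fitted to one simulated subject's mapping, they would not carry over to a different subject with a different mapping, which mirrors why a decoder trained on Huth's brain could not decode mine.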

I was in the machine for less than half an hour and, as I feared, the AI was unable to discern that I had been listening to the passage from The Wizard of Oz audiobook that talked about Dorothy traveling down the yellow brick road.

Huth was listening to the same audio, but because the AI model had been trained on his brain, it could accurately predict certain passages of the audio.

Although the technology is still in its infancy and shows great promise, some people may find comfort in its limitations. AI cannot simply read our minds, at least for now.

Huth stated that the real potential use of this is to help those who cannot communicate.

He and other UT Austin researchers believe the technology may one day be used by patients with “locked-in” syndrome, stroke victims and others whose brains still function but who are unable to speak.

“Ours is the first instance where we have shown that without brain surgery, we can get this level of precision. So we think this is like the first step to genuinely helping the mute without requiring neurosurgery,” he said.

Groundbreaking medical advances like this are no doubt good news for people with debilitating illnesses, but they also raise questions about how the technology might be used in controversial settings.

Could it be used to force a prisoner to confess? Or to reveal our deepest, darkest secrets?

The short answer, according to Huth and his colleagues, is no, not at the moment.

For starters, the brain scans must take place in an fMRI machine, the AI must be trained on an individual subject’s brain for many hours, and, according to the Texas researchers, subjects must give their consent. The decoding will not succeed if a person deliberately resists listening to the audio or thinks about something else.

“We believe that everyone’s brain data should be kept private,” said Jerry Tang, the lead author of a paper published earlier this month detailing his team’s findings. Our brains are one of the last frontiers of our privacy.

According to Tang, “obviously, there are concerns that brain-decoding technology could be used in dangerous ways.” Researchers prefer the term “brain decoding” to “mind reading.”

“Mind reading makes me think of the idea of getting to the minor ideas that you don’t want to admit, minor reactions to things. And I don’t think anyone has suggested that we can actually achieve that using this method,” Huth said. “What we can acquire are the general concepts you are considering. We can get at the story someone else is telling you, and if you try to tell a story to yourself, we can get at that as well.”

The makers of generative AI systems, including OpenAI CEO Sam Altman, descended on Capitol Hill this week to testify before a Senate committee about lawmakers’ concerns over the risks posed by the powerful technology. Altman warned that the unchecked development of AI could “cause significant harm to the world” and urged lawmakers to enact regulations to address those concerns.

Echoing that warning, Tang told CNN that lawmakers need to take “mental privacy” seriously in order to protect “brain data” (our thoughts). Those are two of the most dystopian terms I have heard in the AI age.

Although the technology currently works only in very limited cases, that may not always be so.

“It is important not to have a false sense of security and think that things will be like this forever,” Tang warned. “As technology advances, it can affect both our ability to decode and whether decoders need human cooperation.”

Source: vtt.edu.vn