Computers are getting better at simulating reality. Media generated by artificial intelligence (AI) has been making headlines, particularly videos designed to impersonate someone, making it appear as if they are saying or doing something they are not.

A Twitch streamer was caught visiting a website known for making AI-generated pornography of his peers. A group of teenagers in New York made a video that appeared to show their principal hurling racist insults and threatening students.

In Venezuela, AI-generated videos are allegedly being used to spread political propaganda. In all three cases, the videos were created to convince people that someone did something they did not actually do. The term for this type of content is "deepfake."

What is a deepfake?

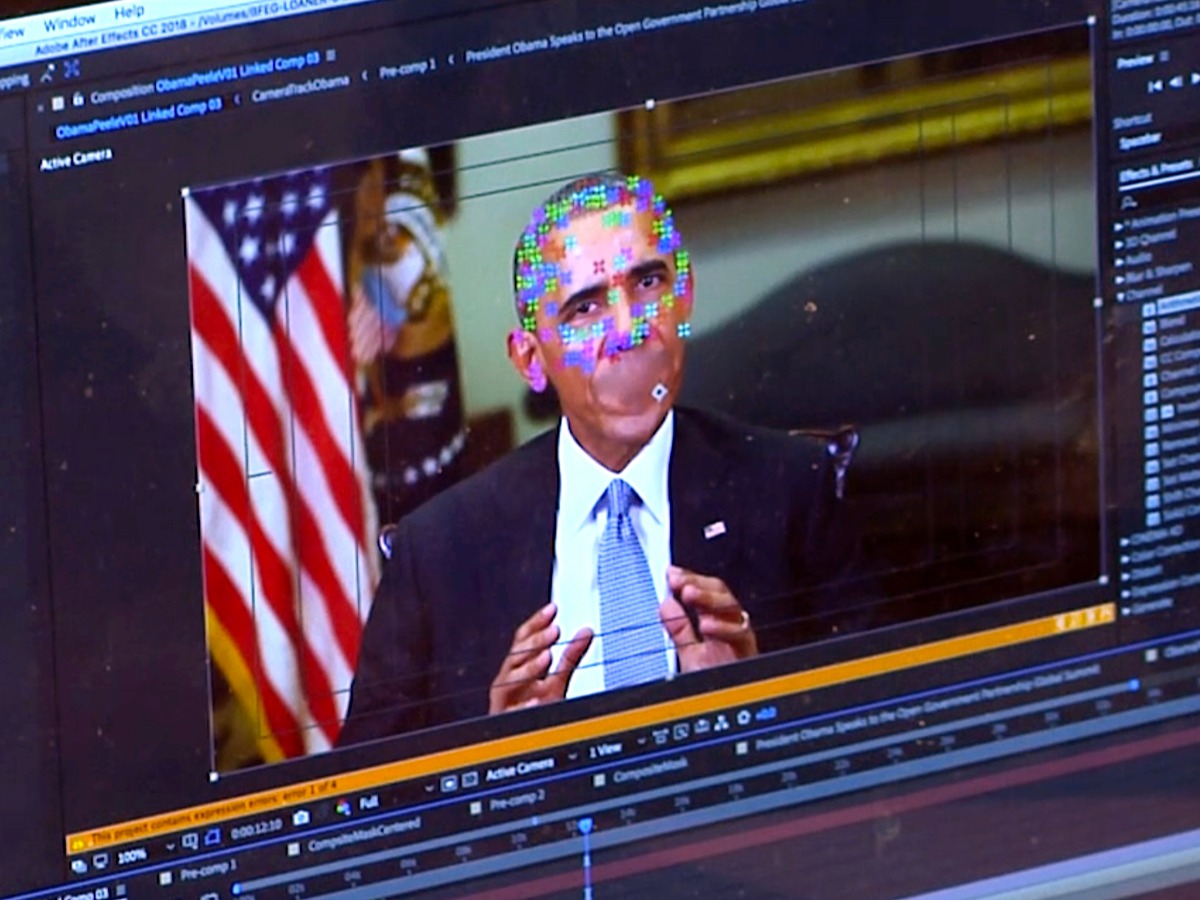

Deepfakes use artificial intelligence to create completely new videos or audio with the aim of showing something that did not happen in reality.

The term “deepfake” comes from the underlying technology: deep learning algorithms, which teach themselves to solve problems using huge amounts of data and can be used to create fake material of real people.

How is a deepfake different from other online manipulations?

Deepfakes are not just any false or misleading images. The AI-generated picture of the Pope in a puffer jacket and the fake scenes of Donald Trump’s arrest that circulated shortly before his indictment were generated by AI, but they are not deepfakes. (When images like these are combined with false information, they are called “shallow fakes.”)

What distinguishes a deepfake is how little human input is involved. With a deepfake, the user only gets to decide at the end of the generation process whether the result is what they wanted. Other than adapting the training data and saying “yes” or “no” to what the computer generates after the fact, they have no say in how the computer chooses to make it.

How to detect a deepfake?

A few indicators can help identify a deepfake.

Are the details blurry or obscure? Look for problems with skin or hair, and for faces that appear blurrier than their surroundings. The focus may look unnaturally soft.

Does the lighting look unnatural? Deepfake algorithms often keep the lighting of the clips used as models for the fake video, which is a poor match for the lighting of the target video.

Do the words or sounds fail to match the visuals? The audio may not appear to match the person, especially if the video was faked but the original audio was not manipulated as carefully.

Is the source reliable? Reverse image search is a technique journalists and researchers often use to find the original source of an image, and it is something you can use right now. You should also check who posted the image, where they posted it, and whether it makes sense for them to have done so.
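For readers who want to see how the blurriness indicator could be checked by a program, here is a minimal sketch. It is an illustration only, not a deepfake detector, and the article does not name any particular method. The sketch assumes the variance of a Laplacian filter as the sharpness measure (a common heuristic), assumes the image is already a grid of brightness values, and assumes the face location (`face_box`) comes from elsewhere. The function names are hypothetical.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Sharpness score for a 2D grid of brightness values (at least 3x3).

    Applies a 4-neighbour Laplacian to every interior pixel and returns the
    variance of the responses. Lower values suggest a softer, blurrier image.
    """
    responses = []
    for y in range(1, len(gray) - 1):
        for x in range(1, len(gray[0]) - 1):
            responses.append(
                gray[y - 1][x] + gray[y + 1][x]
                + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    return pvariance(responses)


def crop(gray, top, left, height, width):
    """Return the rectangular sub-grid starting at (top, left)."""
    return [row[left:left + width] for row in gray[top:top + height]]


def face_blur_ratio(gray, face_box):
    """Face sharpness divided by whole-frame sharpness (hypothetical score).

    face_box is (top, left, height, width) and is assumed to be supplied by
    some face detector, which is outside the scope of this sketch.
    A ratio well below 1 means the face is softer than the frame overall.
    """
    frame_sharpness = laplacian_variance(gray)
    if frame_sharpness == 0:
        return 1.0  # a completely flat frame gives no basis for comparison
    return laplacian_variance(crop(gray, *face_box)) / frame_sharpness
```

A low ratio is only a hint that a face deserves a closer look. Ordinary photos can have soft faces too, for example when the camera focuses on the background, so any cut-off value would need to be chosen by experiment.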

Header image/Facebook image credit: AP

Source: vtt.edu.vn